In an era where precision, speed, and efficiency are paramount, the evolution of sensor technology stands at the forefront of innovation. As a pivotal player in this dynamic landscape, Inertial Labs has again pushed the boundaries with their next-generation Sensor Fusion Platforms.

Seamlessly merging multiple sensing technologies, these platforms promise enhanced accuracy, reduced drift, and a glimpse into the future of integrative, high-performance sensing solutions.

Harnessing the power of advanced algorithms and state-of-the-art hardware, Inertial Labs is setting a new gold standard for sensor systems. These platforms are not just about collecting data; they’re about making that data more meaningful, actionable, and reliable.

Moreover, as we stand on the precipice of a fourth industrial revolution—with the rise of smart cities, autonomous transportation, and interconnected IoT devices—these next-generation platforms by Inertial Labs are positioned to play a crucial role. By offering an unparalleled fusion of accuracy, efficiency, and adaptability, they are set to redefine our understanding of sensory data acquisition and processing, shaping the future of countless sectors and applications. Join us as we unpack the intricacies of sensor fusion platforms, exploring their potential to transform how we perceive and interact with the world around us.

Visually Enhanced Inertial Navigation System (Next Gen – VINS)

• The VINS (Visual Inertial Navigation System) consists of three core sub-systems that work together as a tightly integrated sensor fusion platform:

1. Air Data Computer (ADC) module

2. Sensor module

3. Processing module.

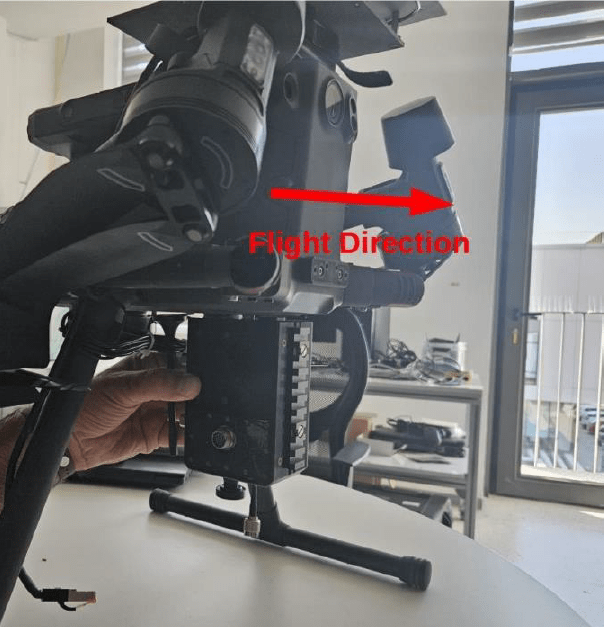

• The VINS product is divided into three sub-systems so that it can be installed modularly on smaller unmanned aerial vehicles with size, weight, and power (SWaP) limitations.

• The product will house a visual odometry-based computer vision algorithm that determines velocity and position updates from raw camera imagery, combined with other aiding data, for enhanced GNSS-denied performance in tight integration with the INS.
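To illustrate why visual odometry updates help when GNSS is unavailable, here is a minimal sketch of a toy one-dimensional Kalman filter that propagates position and velocity from a biased accelerometer and corrects the state with velocity measurements. It is not Inertial Labs' INS filter: the class `PosVelFilter`, the function `run_demo`, the noise values, and the idealized noise-free velocity measurement are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class PosVelFilter:
    """Toy 1-D Kalman filter over the state [position, velocity] (hypothetical)."""
    pos: float = 0.0
    vel: float = 0.0
    # Entries of the symmetric 2x2 covariance matrix P.
    p_pp: float = 1.0
    p_pv: float = 0.0
    p_vv: float = 1.0

    def predict(self, accel: float, dt: float, accel_noise: float = 0.5) -> None:
        """Propagate the state with one accelerometer sample."""
        self.pos += self.vel * dt + 0.5 * accel * dt * dt
        self.vel += accel * dt
        # P = F P F^T + Q with F = [[1, dt], [0, 1]] and Q driven by accel noise.
        q = accel_noise ** 2
        p_pp = self.p_pp + 2 * dt * self.p_pv + dt * dt * self.p_vv
        p_pv = self.p_pv + dt * self.p_vv
        self.p_pp = p_pp + 0.25 * dt ** 4 * q
        self.p_pv = p_pv + 0.5 * dt ** 3 * q
        self.p_vv = self.p_vv + dt * dt * q

    def update_velocity(self, vo_vel: float, vo_noise: float = 0.1) -> None:
        """Correct the state with a visual-odometry velocity measurement."""
        # Measurement matrix H = [0, 1], so S = P_vv + R and K = P H^T / S.
        s = self.p_vv + vo_noise ** 2
        k_pos = self.p_pv / s
        k_vel = self.p_vv / s
        innovation = vo_vel - self.vel
        self.pos += k_pos * innovation
        self.vel += k_vel * innovation
        # P = (I - K H) P, written out element by element.
        p_pp = self.p_pp - k_pos * self.p_pv
        p_pv = self.p_pv - k_pos * self.p_vv
        p_vv = self.p_vv - k_vel * self.p_vv
        self.p_pp, self.p_pv, self.p_vv = p_pp, p_pv, p_vv


def run_demo(steps: int = 100, dt: float = 0.1,
             true_vel: float = 2.0, accel_bias: float = 0.2):
    """Compare inertial-only propagation with velocity-aided propagation."""
    ins_only = PosVelFilter(vel=true_vel)
    fused = PosVelFilter(vel=true_vel)
    for _ in range(steps):
        measured_accel = 0.0 + accel_bias  # true acceleration is zero
        ins_only.predict(measured_accel, dt)
        fused.predict(measured_accel, dt)
        fused.update_velocity(true_vel)  # idealized, noise-free VO velocity
    return ins_only, fused


if __name__ == "__main__":
    ins_only, fused = run_demo()
    print("inertial-only velocity:", ins_only.vel)
    print("velocity-aided velocity:", fused.vel)
```

In this sketch the inertial-only velocity drifts steadily with the accelerometer bias, while the aided estimate stays close to the true 2 m/s. Velocity updates alone slow position drift but do not remove it, which is one reason a real system would combine several aiding sources.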

Next-Gen VINS Modules

Next-Gen VINS Hardware I/O System Architecture

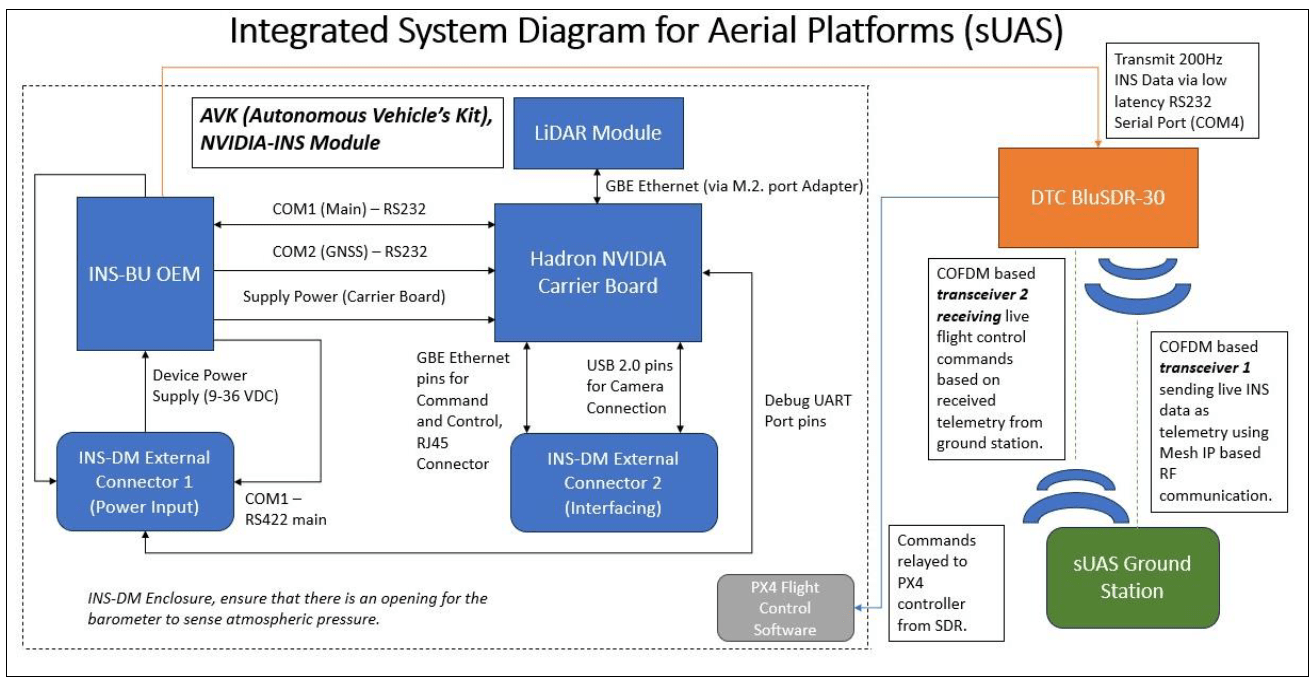

AVK (Autonomous Vehicle Kit) Module

• The developer’s INS-DM module houses a single-antenna INS-BU OEM tightly integrated with an NVIDIA Orin NX-based processor, allowing users to mount, deploy, and operate custom software with full access to the INS filter for accurate output of position, velocity, orientation, and time. The enclosure exposes a range of interfaces, giving users a complete development environment for integrating aiding data sources with direct access to the INS.

• The AVK development environment allows users to interface with the following:

– Full-resolution (360-degree) LiDAR sensors

– Multiple cameras

– Inertial Labs-based INS

– FOG (fiber optic gyroscope) IMUs

– External SAFG, ADCs (air data computers), ground speed radar, and other compatible aiding data sensors.
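When several of the sensors listed above feed one filter, their samples usually need to be processed in time order. The following is a minimal sketch of that step, assuming each source already delivers time-sorted samples. The `Sample` type, the source labels, and the payloads are hypothetical and do not reflect the AVK's actual software interface.

```python
import heapq
from typing import Iterable, Iterator, NamedTuple


class Sample(NamedTuple):
    t: float        # timestamp in seconds
    source: str     # hypothetical source label, e.g. "lidar"
    value: object   # payload, left opaque in this sketch


def merge_streams(*streams: Iterable[Sample]) -> Iterator[Sample]:
    """Yield samples from several time-sorted streams in global time order."""
    return heapq.merge(*streams, key=lambda s: s.t)


# Made-up sample streams, each already sorted by timestamp.
lidar = [Sample(0.00, "lidar", "scan0"), Sample(0.10, "lidar", "scan1")]
camera = [Sample(0.03, "camera", "frame0"), Sample(0.06, "camera", "frame1")]
adc = [Sample(0.05, "adc", 41.7)]

ordered = [s.source for s in merge_streams(lidar, camera, adc)]
print(ordered)  # ['lidar', 'camera', 'adc', 'camera', 'lidar']
```

`heapq.merge` is lazy, so the same pattern works with generators that read from live sensor queues, as long as each individual stream stays sorted.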

Autonomous Vehicle Kit I/O System Diagram

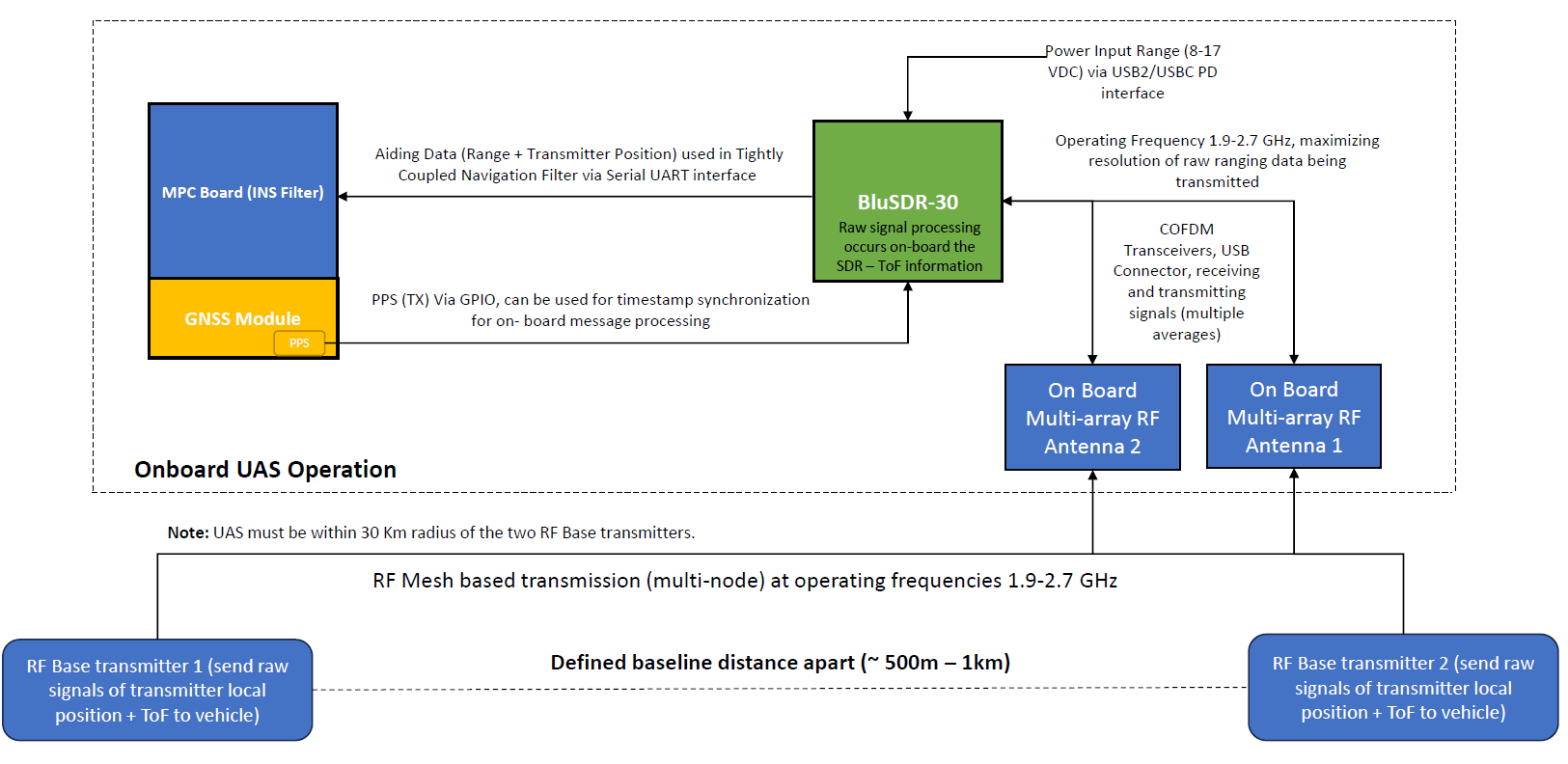

Concept of Operations – Using Mesh-based RF Radio Transceiver Network for Enhanced GNSS-Denied Aerial Navigation

Utilizing AVK Modules for Telemetry & Control Applications

• To illustrate how AVK modules (e.g., NVIDIA-INS) can become mission-critical systems, the concept below shows integration with Pixhawk flight controllers and RF-based transceivers to support live telemetry and control operations from a ground station.

Components mounted on the UAS include:

• AVK

• Mesh IP-based RF transceiver device

• Pixhawk Flight Controller

The UAS can maintain active communication within a 30 km radius of the ground station.
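As a rough illustration of the range figure, the sketch below checks whether a UAS position lies within 30 km of a ground station using the haversine great-circle distance. The function names and coordinates are assumptions for illustration. The check ignores altitude, terrain, and line of sight, all of which affect a real RF link.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius
MAX_RANGE_KM = 30.0       # range quoted in the text


def ground_distance_km(lat1: float, lon1: float,
                       lat2: float, lon2: float) -> float:
    """Great-circle distance between two lat/lon points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def in_link_range(station: tuple, uas: tuple,
                  max_km: float = MAX_RANGE_KM) -> bool:
    """True if the UAS is within max_km of the ground station."""
    return ground_distance_km(*station, *uas) <= max_km


station = (0.0, 0.0)  # hypothetical ground station on the equator
print(in_link_range(station, (0.0, 0.2)))  # about 22 km away: True
print(in_link_range(station, (0.0, 0.3)))  # about 33 km away: False
```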

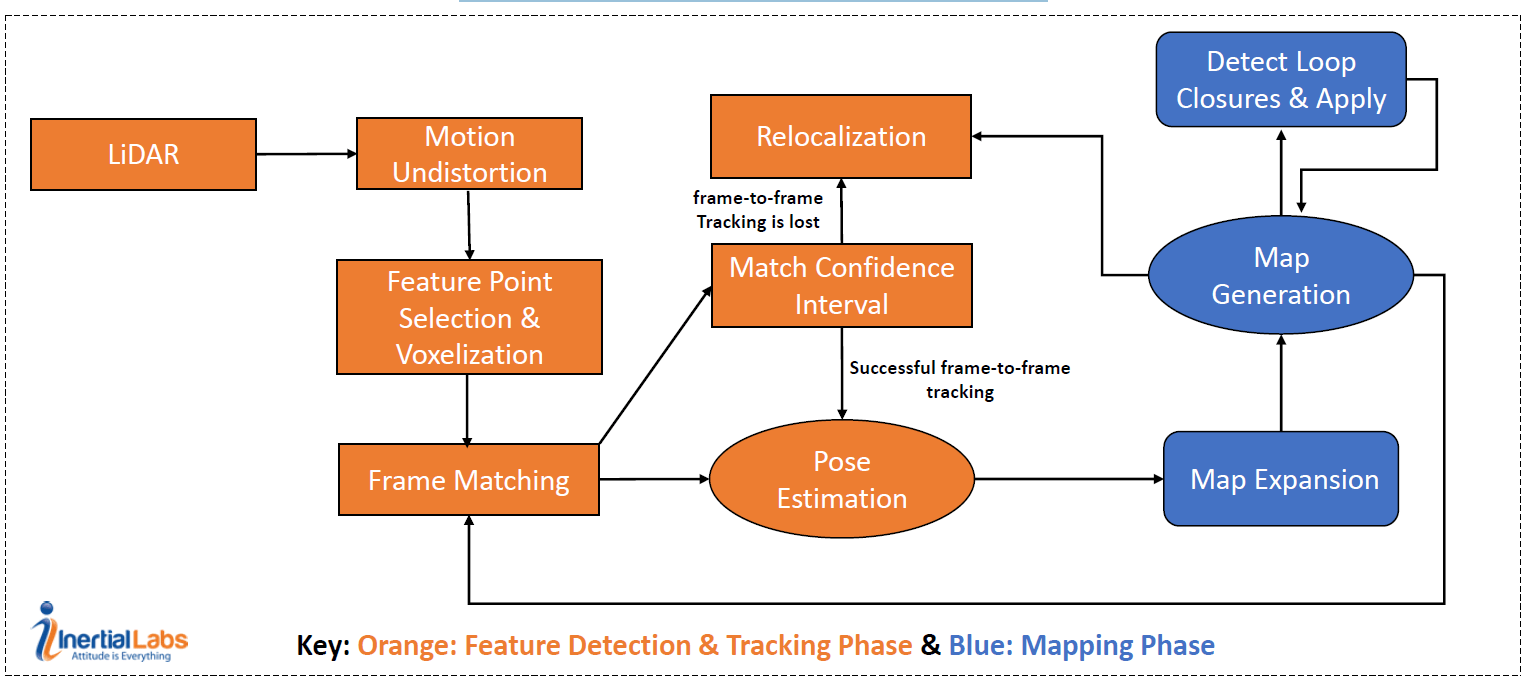

3D LiDAR-Based SLAM Algorithm Model

The diagram below shows how Inertial Labs’ two-phase 3D LiDAR SLAM solution is implemented and segmented into multiple algorithmic processes.

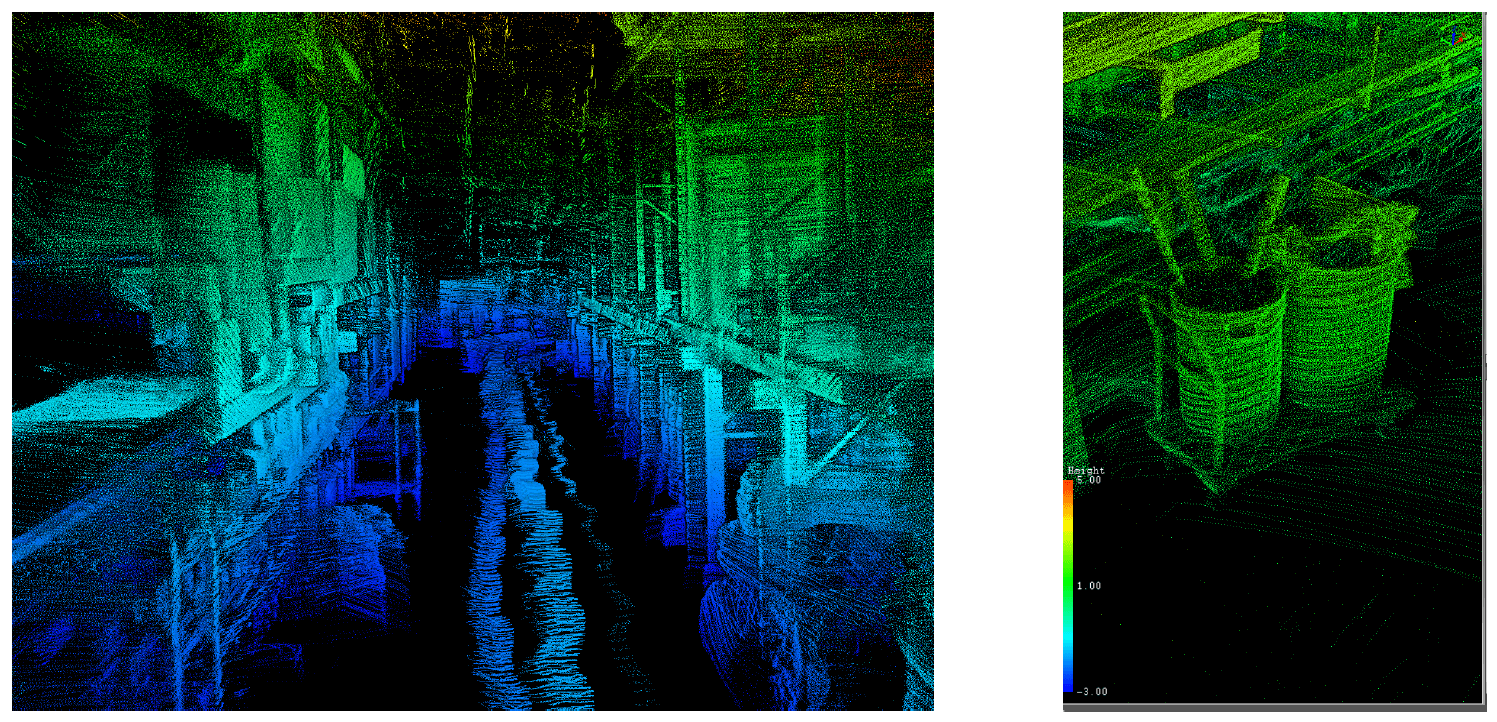
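The text does not spell out the two phases, so the following is only a generic sketch of one building block common to LiDAR SLAM pipelines: transforming each scan from the sensor frame into a shared map frame using an estimated pose, then accumulating the results into a point cloud. It is simplified to 2D, it assumes the poses are already known, and the names `scan_to_map` and `build_map` are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]
Pose = Tuple[float, float, float]  # x, y, yaw in radians (2D simplification)


def scan_to_map(scan: List[Point], pose: Pose) -> List[Point]:
    """Rotate and translate sensor-frame points into the map frame."""
    x, y, yaw = pose
    c, s = math.cos(yaw), math.sin(yaw)
    return [(x + c * px - s * py, y + s * px + c * py) for px, py in scan]


def build_map(scans: List[List[Point]], poses: List[Pose]) -> List[Point]:
    """Accumulate all scans into one map-frame point cloud."""
    cloud: List[Point] = []
    for scan, pose in zip(scans, poses):
        cloud.extend(scan_to_map(scan, pose))
    return cloud


# The same landmark at map position (2, 1), observed from two different poses.
scans = [[(2.0, 1.0)], [(1.0, -1.0)]]
poses = [(0.0, 0.0, 0.0), (1.0, 0.0, math.pi / 2)]
cloud = build_map(scans, poses)
print(cloud)
```

With correct poses, both observations land on the same map point. Errors in the estimated poses show up as smeared or doubled surfaces in the accumulated cloud.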

3D LiDAR SLAM Developments (Indoor Mapping)

The images above show indoor point clouds produced by the LiDAR-based SLAM engine using Inertial Labs’ XT-32 RESEPI unit. The scans were captured at a hardware department store.

3D LiDAR SLAM Developments (Outdoor Mapping)

The images above show outdoor point clouds produced by the LiDAR-based SLAM engine using Inertial Labs’ XT-32 RESEPI unit. The point cloud is a scan of an outdoor marketplace.

Inertial Labs’ advancements in sensor fusion platforms underscore the incredible potential and trajectory of modern sensing technology. Their commitment to merging precision with integrative data analysis sets a precedent for what’s possible in this rapidly evolving field. As industries and societies move towards a future heavily reliant on accurate, fast, and cohesive data interpretation, platforms like those developed by Inertial Labs will undoubtedly be instrumental. Beyond mere data collection, they represent a holistic approach to understanding and leveraging the world’s sensory inputs. The fusion of diverse sensors into a cohesive, unified system signifies more than technological progression—it symbolizes the harmonization of disparate data into a narrative that can guide decisions, innovations, and visions of the future. As we navigate this exciting epoch of technological innovation and as the boundaries of what sensors can achieve continue to expand, it’s evident that solutions like those from Inertial Labs will not just support but actively shape our journey toward a more interconnected, intelligent, and insightful future.

In conclusion, Inertial Labs’ next-generation sensor fusion platforms represent a significant leap forward in precision navigation and motion sensing technologies. These platforms offer unparalleled accuracy and reliability with advanced sensor integration and sophisticated algorithms. They are poised to revolutionize industries ranging from autonomous vehicles to robotics, aerospace, and virtual reality. These sensor fusion platforms enable more efficient and effective operations across various sectors by delivering precise, real-time data. As we continue to push the boundaries of what’s possible with technology, Inertial Labs stands at the forefront, providing innovative solutions that will shape the future of sensor technology.

Follow Inertial Labs for new and advanced technology!

For more information:

Anton Barabashov

VP of Business Development

Inertial Labs Inc.

il.sales@viavisolutions.com